Monitor dynamic tables¶

This topic describes how to view and monitor the dynamic tables in your pipelines. For guidance on what to look for when diagnosing performance issues, see Key performance indicators.

| Section | Description |

|---|---|

| List dynamic tables or view information on specific columns | List the dynamic tables in a schema and view information about them. |

| View the graph of tables connected to your dynamic tables | See the graph of tables connected to your dynamic tables. |

| Monitor your dynamic tables using SQL table functions | Monitor your dynamic tables using SQL table functions. |

| Monitor the refresh status for your dynamic tables | View the refresh status for your dynamic tables. |

List dynamic tables or view information on specific columns¶

To list the dynamic tables in a schema and view information about those dynamic tables, you can use either the following SQL commands or Snowsight, as long as you use a role that has the MONITOR privilege on the dynamic tables.

For more information, see Privileges to view a dynamic table’s metadata.

To list the dynamic tables in the current database (or in the account, if no database is currently in use), use the SHOW DYNAMIC TABLES command.

For example, to list the dynamic tables with names that start with product_ in the database mydb and schema myschema, execute

the following SQL statement:

To output information about the columns in a dynamic table, use the DESCRIBE DYNAMIC TABLE command.

For example, to list the columns in my_dynamic_table, execute the following SQL statement:

Dynamic tables are also included in the results of the TABLES view.

To list the dynamic tables in a schema and view information about a specific dynamic table, do the following:

- Sign in to Snowsight.

- In the navigation menu, select Catalog » Database Explorer.

- Select a database and schema.

- Select the Dynamic Tables tab or expand Dynamic Tables in the database object explorer.

- To view information about a specific dynamic table, select the dynamic table from the list of dynamic tables in the Dynamic Tables tab or from the database object explorer.

- The tabs in this page provide the following details about your selected dynamic table:

-

Table Details: Displays basic information about the dynamic table, including:

-

The scheduling state of your dynamic table.

-

The last refresh status of your dynamic table. For failed refreshes, you can see more information about the error if you hover over the status.

-

The current and target lag for your dynamic table.

-

Whether incremental refreshes or full refreshes are used to update the table.

-

The definition of the dynamic table.

-

The tags for the dynamic table.

-

The privileges granted for working with the dynamic table.

-

- Columns: Information about the columns in the dynamic table.

- Data Preview: A preview of up to 100 rows of the data in the dynamic table.

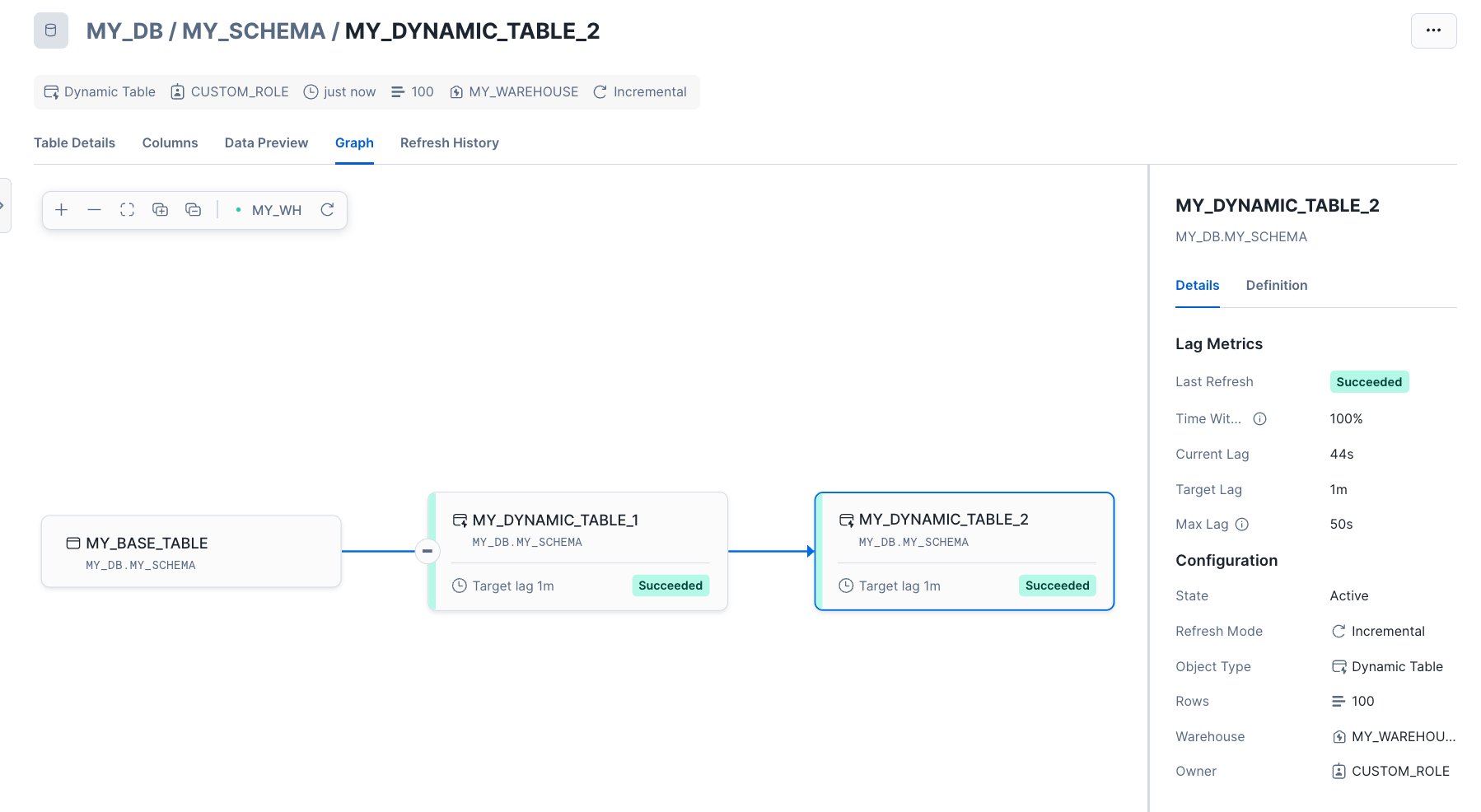

- Graph: Displays the directed acyclic graph (DAG) that includes this dynamic table.

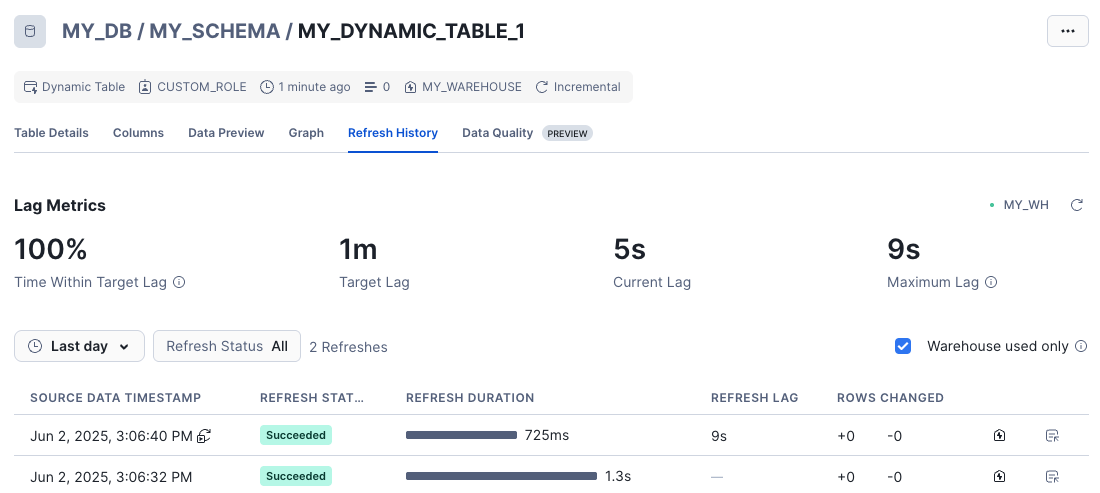

- Refresh History: Displays the history of refreshes and the lag metrics.

View the graph of tables connected to your dynamic tables¶

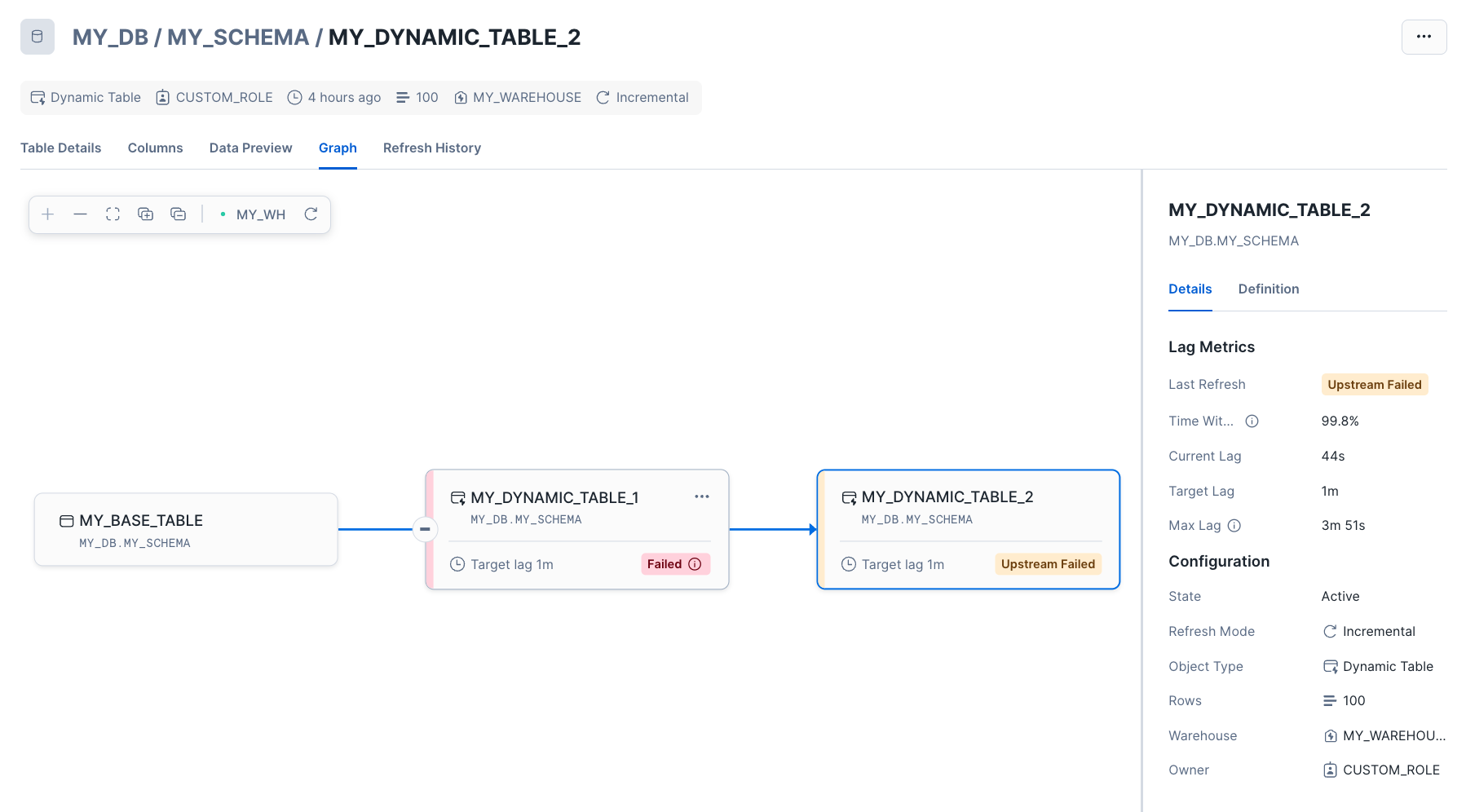

Viewing dependencies is particularly useful for troubleshooting dynamic table chains. In Snowsight, you can visualize which dynamic tables a given dynamic table depends on using the lineage graph. For example, you can identify the following:

- Upstream dependencies where a dynamic table pulls data from.

- Downstream dependencies that might be impacted by changes to a dynamic table.

Dependencies can impact refresh performance. For example, suppose your dynamic table’s upstream table has a large data load added just before its scheduled refresh. Your dynamic table will wait for it to finish the refresh, causing it to miss its target lag. In the lineage graph, you’d see the input table marked as “executing,” indicating the delay.

To view the graph of a particular dynamic table, do the following:

- Sign in to Snowsight.

- In the navigation menu, select Transformation » Dynamic tables.

- Select your dynamic table. The Graph view is displayed by default. This displays the graph with the node for the dynamic table selected. The Details pane on the right displays information about its lag metrics and configuration.

- To display the details of a different table in the graph, select that table.

To update the graph, select the refresh button in the bar above the graph.

If a refresh failed due to an UPSTREAM_FAILED error code, you can use the graph to visualize which upstream table caused the failure.

To view the full details of a table in the graph, see List dynamic tables or view information on specific columns.

Monitor your dynamic tables using SQL table functions¶

Use the following INFORMATION_SCHEMA table functions to monitor your dynamic tables:

-

DYNAMIC_TABLES: Returns metadata about your dynamic tables, including aggregate lag metrics and the status of the most recent refreshes, within seven days of the current time.

-

DYNAMIC_TABLE_REFRESH_HISTORY: Returns information about each completed and running refresh of your dynamic tables, including refresh status and trigger, and the target lag.

- DYNAMIC_TABLE_REFRESH_HISTORY view: This Account Usage view also displays information for dynamic table refresh history. It is useful for debugging issues that are for longer than the DYNAMIC_TABLE_REFRESH_HISTORY table function’s data retention time (seven days).

-

DYNAMIC_TABLE_GRAPH_HISTORY: Returns information that provides the history of each dynamic table, its properties, and its dependencies on other tables and dynamic tables.

You can use this table function to get a snapshot of the dependency tree of dynamic tables at a given point in time.

The output also reflects the changes made to the properties of a dynamic table over time. Each row represents a dynamic table and a specific set of properties. If you change a property of a dynamic table (for example, the target lag), the function returns the most up to date property.

Dynamic tables in ACCESS_

Dynamic table refresh operations are recorded in the ACCESS_HISTORY view:

- Refresh reads: The base tables read during a refresh appear in the

base_objects_accessedfield of the ACCESS_HISTORY record. - Refresh writes: The dynamic table being refreshed appears in

object_modified_by_ddlas a DDL ALTER operation withdynamicTableAction = 'REFRESH'. - User queries: SELECT queries against a dynamic table are recorded as standard table access.

Monitor the refresh status for your dynamic tables¶

This section explains how to view the refresh status of all or specific dynamic tables.

- For guidance on what to look for when diagnosing slow refreshes, see Key performance indicators.

- For troubleshooting skipped or failed refreshes, see Diagnosing common dynamic table refresh issues.

Monitor the refreshes for all your dynamic tables¶

You can use Snowsight or the DYNAMIC_TABLES table function to view the refresh status for all your dynamic tables.

Sign in to Snowsight. In the navigation menu, select Transformation » Dynamic tables.

You can view the state and last refresh status for all your dynamic tables on this page. You can also filter by database or schema to narrow the results.

DYNAMIC_TABLES provides information about all of the dynamic tables in your account.

The following example retrieves the information about the state and target lag for all dynamic tables in the account and their associated database and schema.

The following example retrieves the state and information about each state for refresh for all dynamic tables in the account.

Monitor all the refreshes for a specific dynamic table¶

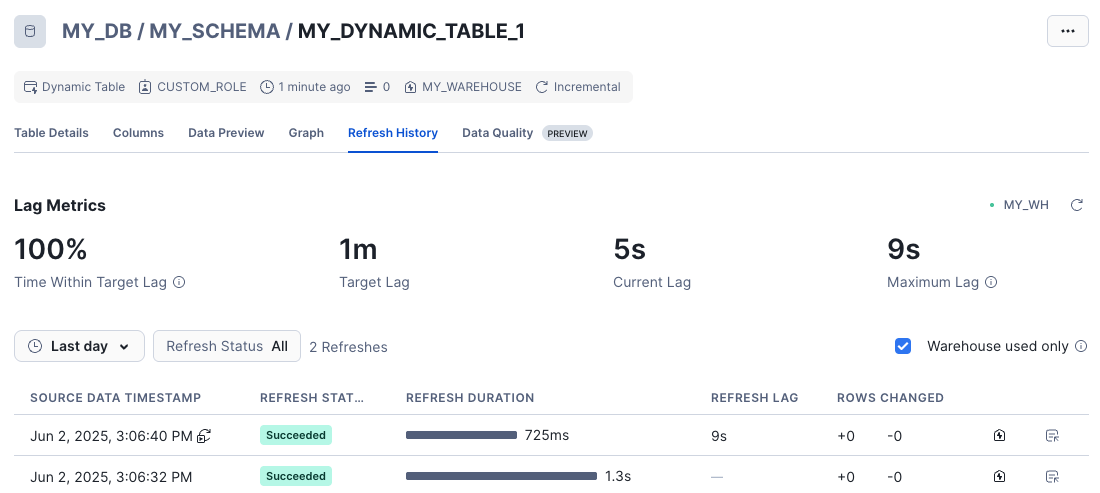

You can use Snowsight or the DYNAMIC_TABLE_REFRESH_HISTORY table function to view the refresh history for a given dynamic table.

-

Sign in to Snowsight.

-

In the navigation menu, select Transformation » Dynamic tables.

-

Select your dynamic table and then go to the Refresh History tab.

This page displays your dynamic table’s refresh history, which includes information about each refresh’s status, duration, and actual lag time, and the number of rows changed with each refresh.

It also displays your dynamic table’s lag metrics, which includes the percentage of the time within the target lag and the longest actual lag time during the given interval.

To view the refresh history for a specific dynamic table, use the DYNAMIC_TABLE_REFRESH_HISTORY table function.

For example, if you want to view the refresh history for all the dynamic tables in the my_db database and my_schema schema, execute

the following statement:

To filter for refreshes that had errors, pass in the argument ERROR_ONLY => TRUE. For example:

Event table monitoring and alerts for dynamic tables¶

This topic discusses how to query an event table that provides information about your refresh status and how to set up alerts on new data in an event table.

Query an event table to monitor refreshes¶

When a dynamic table is refreshed, you can configure Snowflake to record an event that provides information about the status of the refresh operation. The event is recorded in the active event table associated with the dynamic table.

For example, suppose that you have associated an event table with a database. When a dynamic table in that database is refreshed, Snowflake records an event to that event table.

You can query the events logged in this active event table to monitor your dynamic table refreshes.

For example, to get the timestamp, dynamic table name, query ID, and error message for errors with dynamic tables in the database my_db,

do the following:

The following example retrieves all columns for upstream errors with dynamic tables in the schema my_schema:

For information about the role that you need to use to query the event table and the conditions that you can use to filter the results, see Set up an alert on new data.

Set up alerts on new data to monitor refreshes¶

As mentioned earlier, when a dynamic table is refreshed, an event is logged in the event table to indicate whether the refresh succeeded or failed. You can set up an alert on new data to monitor the event table. You can configure the alert to send a notification when a refresh fails.

The next sections explain how to set up the event logging to capture the events, how to set up the alert, and how to interpret the events recorded in the event table:

- Set the severity level of the events to capture

- Set up an alert on new data

- Information logged for dynamic table events

Note

Logging events for dynamic tables incurs costs. See Costs of telemetry data collection.

Set the severity level of the events to capture¶

Note

If you do not set the severity level, no events will be captured.

To set up dynamic table events to be recorded to the event table, set the severity level of events that you want captured in the event table. Events are captured at the following levels:

ERROR: Refresh failure events.WARN: Failures to refresh upstream dynamic tables and refresh failure events.INFO: Successful refresh events, failures to refresh upstream dynamic tables, and refresh failure events.

To set the level, set the LOG_EVENT_LEVEL parameter for the account or object. You can set the level for:

- All objects in the account.

- All objects in a database or schema.

- A specific dynamic table.

For example:

-

To capture ERROR-level dynamic table events for all supported objects in the account, execute ALTER ACCOUNT SET LOG_EVENT_LEVEL:

Setting

LOG_EVENT_LEVELat the account level applies to log events (record type EVENT) for supported workloads in the account, including dynamic tables. It does not replace LOG_LEVEL for log messages from logging APIs. For more information, see Parameters. -

To capture INFO-level events for all supported objects in the database

my_db, execute ALTER DATABASE … SET LOG_EVENT_LEVEL:Similar to the case of setting the level on the account, setting the level on the database affects log events for supported object types in the database.

-

To capture WARN-level events for the dynamic table

my_dynamic_table, execute ALTER DYNAMIC TABLE … SET LOG_EVENT_LEVEL:

Set up an alert on new data¶

After you set the severity level for logging events, you can set up an alert on new data to monitor the event table for new events that indicate a failure in a dynamic table refresh. An alert on new data is triggered when new rows in the event table are inserted and meet the condition specified in the alert.

Note

To create the alert on new data, you must use a role that has been granted the required privileges to query the event table.

-

If the alert condition queries the default event table (SNOWFLAKE.TELEMETRY.EVENTS) or the predefined view (SNOWFLAKE.TELEMETRY.EVENTS_VIEW view), see Roles for access to the default event table and EVENTS_VIEW.

To manage access to the EVENTS_VIEW view, see Manage access to the EVENTS_VIEW view.

-

If the alert condition queries a custom event table, see Access control privileges for event tables.

To manage access to a custom event table, see Managing access to event table data.

In the alert condition, to query for dynamic table events, select rows where

resource_attributes:"snow.executable.type" = 'DYNAMIC_TABLE'. To narrow down the list of events, you can filter on the

following columns:

- To restrict the results to dynamic tables in a specific database, use

resource_attributes:"snow.database.name". - To return events where the refresh failed due to an error with the dynamic table, use

value:state = 'FAILED'. - To return events where the refresh failed due to an error with an upstream dynamic table, use

value:state = 'UPSTREAM_FAILURE'.

For information on the values logged for a dynamic table event, see Information logged for dynamic table events.

Note

The timestamp column in the event table stores values in UTC. If you use a scheduled alert with a timestamp filter

(for example, timestamp > DATEADD('minute', -5, CURRENT_TIMESTAMP())), convert the current timestamp to UTC to ensure

accurate comparisons:

For example, the following statement creates an alert on new data that performs an action when refreshes fail for dynamic tables

in the database my_db. The example assumes that:

- Your active event table is the default event table (SNOWFLAKE.TELEMETRY.EVENTS).

- You have set up a webhook notification integration for that Slack channel.

Information logged for dynamic table events¶

When a dynamic table refreshes, an event is logged to the event table. The following sections describe the event table row that represents the event:

Event table column values¶

When a dynamic table refreshes, a row with the following values is inserted into the event table.

Note

If a column is not listed below, the column value is NULL for the event.

| Column | Data type | Description |

|---|---|---|

timestamp | TIMESTAMP_NTZ | The UTC timestamp when an event was created. |

observed_timestamp | TIMESTAMP_NTZ | A UTC time used for logs. Currently, this is the same value that is in the timestamp column. |

resource_attributes | OBJECT | Attributes that identify the dynamic table that was refreshed. |

record_type | STRING | The event type, which is EVENT for dynamic table refreshes. |

record | OBJECT | Details about the status of the dynamic table refresh. |

value | VARIANT | The status of the dynamic table refresh and, if the refresh failed, the error message for the failure. |

Key-value pairs in the resource_attributes column¶

The resource_attributes column contains an OBJECT value with the following key-value pairs:

| Attribute name | Attribute type | Description | Example |

|---|---|---|---|

snow.database.id | INTEGER | The internal/system-generated identifier of the database containing the dynamic table. | 12345 |

snow.database.name | VARCHAR | The name of the database containing the dynamic table. | MY_DATABASE |

snow.executable.id | INTEGER | The internal/system-generated identifier of the dynamic table that was refreshed. | 12345 |

snow.executable.name | VARCHAR | The name of the dynamic table that was refreshed. | MY_DYNAMIC_TABLE |

snow.executable.type | VARCHAR | The type of the object. The value is DYNAMIC_TABLE for dynamic table events. | DYNAMIC_TABLE |

snow.owner.id | INTEGER | The internal/system-generated identifier of the role with the OWNERSHIP privilege on the dynamic table. | 12345 |

snow.owner.name | VARCHAR | The name of the role with the OWNERSHIP privilege on the dynamic table. | MY_ROLE |

snow.owner.type | VARCHAR | The type of role that owns the object, for example | ROLE |

snow.query.id | VARCHAR | ID of the query that refreshed the dynamic table. | 01ba7614-0107-e56c-0000-a995024f304a |

snow.schema.id | INTEGER | The internal/system-generated identifier of the schema containing the dynamic table. | 12345 |

snow.schema.name | VARCHAR | The name of the schema containing the dynamic table. | MY_SCHEMA |

snow.warehouse.id | INTEGER | The internal/system-generated identifier of the warehouse used to refresh the dynamic table. | 12345 |

snow.warehouse.name | VARCHAR | The name of the warehouse used to refresh the dynamic table. | MY_WAREHOUSE |

Key-value pairs in the record column¶

The record column contains an OBJECT value with the following key-value pairs:

| Key | Type | Description | Example |

|---|---|---|---|

name | VARCHAR | The name of the event. The value is refresh.status for dynamic table refreshes. | refresh.status |

severity_text | VARCHAR | The severity level of the event, which is one of the following values:

| INFO |

Key-value pairs in the value column¶

The value column contains an VARIANT value with the following key-value pairs:

| Key | Type | Description | Example |

|---|---|---|---|

state | VARCHAR | The state of the refresh, which can be one of the following values:

| SUCCEEDED |

message | VARCHAR | If the value in state is FAILED, this column includes the error message. | SQL compilation error:\nFailure during expansion of view 'MY_DYNAMIC_TABLE': SQL compilation error:\nObject 'MY_DB.MY_SCHEMA.MY_BASE_TABLE' does not exist or not authorized. |

Query pipeline spans to trace refreshes¶

In addition to events, Snowflake can record pipeline spans for dynamic table refreshes. Events and spans are two separate observability mechanisms:

- Events (controlled by LOG_LEVEL) provide logs per-dynamic-table refresh, indicating whether each refresh succeeded or failed.

- Spans (controlled by TRACE_LEVEL) provide richer pipeline-level observability, including correlated trace IDs across a pipeline, skip reasons, and dependency topology.

Spans capture additional states for which events are not emitted, including SKIPPED refreshes due to upstream

skips or refresh cycles where the scheduler skipped refreshing to minimize the lag of the dynamic table and

its consumers.

Note

Recording spans for dynamic tables incurs costs. See Costs of telemetry data collection.

Enable pipeline spans¶

To enable pipeline spans for dynamic table refreshes, set the TRACE_LEVEL parameter to ALWAYS at the

database or schema level:

You can also set this at the database level to capture spans for all dynamic tables in the database:

Query span data¶

To query pipeline spans for dynamic table refreshes, filter for rows where record_type = 'SPAN' and

record:"name" = 'table_refresh':

Span attributes (record_attributes)¶

Each span row includes the following attributes in the record_attributes column, specific to dynamic

table refreshes:

| Attribute name | Type | Description |

|---|---|---|

snow.dynamic_table.state | STRING | The state of the refresh: SUCCEEDED, FAILED, or SKIPPED. |

snow.dynamic_table.state_reason | STRING | Why the dynamic table was skipped or failed. NULL on success. Possible values:

|

snow.dynamic_table.data_timestamp | STRING | The transactional timestamp when the refresh was evaluated. (This might be slightly before the actual time of the refresh.) All data in base objects that arrived before this timestamp is included in the dynamic table. |

Note

Spans cover SKIPPED states (with reasons UPSTREAM_SKIP and NOT_EFFECTIVE_TICK_TO_REFRESH)

for which events are not emitted. If you need visibility into skipped refreshes, use spans instead of events.

Pipeline correlation with trace IDs and span links¶

A unique capability of spans is pipeline-level correlation. When a refresh cycle includes refresh operations

for multiple dynamic tables, all the resulting spans share the same trace:"trace_id". This lets you

reconstruct the full set of refresh operations that occurred in a single refresh cycle.

Each span also includes a record:"links" array that lists the span_id of each upstream dependency.

For example, if DT_B depends on DT_A, then DT_A’s span_id appears in DT_B’s

record:"links".

The record:"status":"code" field is STATUS_CODE_OK for successes and skips, and

STATUS_CODE_ERROR for failures.

For example, to correlate all dynamic table refresh operations in a single refresh cycle, query for spans

with the same trace_id:

Trace a pipeline refresh¶

This section walks through how to use pipeline spans to trace a refresh cycle end to end: finding the relevant spans, retrieving the full pipeline, and diagnosing failures or skips.

Example pipeline scenario¶

Consider a linear pipeline of four dynamic tables:

In this example, DT1 and DT2 refresh successfully, but DT3 fails due to a query error. Because

DT3 failed, DT4 is automatically skipped with the reason UPSTREAM_FAILURE.

The following steps show how to retrieve and interpret the pipeline spans for this scenario.

Step 1: Find the span for a dynamic table¶

To investigate a specific dynamic table’s refresh, query the event table for its most recent span. Filter by database, schema, and dynamic table name to ensure you match the correct object:

The trace_id value identifies the refresh cycle. All dynamic table spans within a single pipeline refresh

share the same trace_id. Use this value in the next step to retrieve

all spans from the same refresh cycle.

Step 2: Retrieve the full pipeline¶

Query all spans that share the same trace_id to see every dynamic table in the refresh cycle.

Include record:"links" to capture the dependency graph and DATEDIFF to compute the duration of each

refresh operation:

From this result, you can see the full picture of the refresh cycle:

DT1andDT2succeeded (30 and 29 seconds respectively).DT3failed after 19 seconds due to a query failure.DT4was skipped immediately (represented by a zero-duration span) because its upstream dependency failed.- The

UPSTREAM_LINKScolumn shows each dynamic table’s direct dependencies byspan_id.

Step 3: Identify the root cause of a failure or skip¶

When a dynamic table is skipped or fails, you can trace its upstream dependencies through the span links to find the root cause. This query resolves the span links for a specific dynamic table back to the other spans in the pipeline:

In this example, DT4 was skipped because its upstream dependency DT3 failed with

QUERY_FAILURE. You can use the query_id to investigate the failed query further (for example,

by calling GET_QUERY_OPERATOR_STATS or

checking the query history).

For longer dependency chains, repeat the same pattern: replace the target dynamic table name to walk

further upstream until you reach a span with state = 'FAILED' and state_reason = 'QUERY_FAILURE',

which is the root cause.

Find downstream impact of a failure¶

To find which dynamic tables were affected by a specific failure, reverse the span link lookup. This query

finds all dynamic tables whose record:"links" reference the failed dynamic table’s span_id:

This returns the direct dependents of the failed dynamic table. To find all transitively affected dynamic

tables, repeat the query with each dependent’s span_id to walk further downstream.

Use OpenTelemetry-compatible tools¶

Dynamic table pipeline spans follow the standard OpenTelemetry data model. Because all spans in a refresh

cycle share the same trace:"trace_id", you can export them from the event table into

OpenTelemetry-compatible tools for visualization.

These tools can render the pipeline as a trace timeline, showing the duration and status of each dynamic table’s refresh operation and the dependency relationships encoded in the span links.

Monitor dynamic table performance¶

Performance monitoring helps you with the following tasks:

- Identify slow or costly dynamic table refreshes.

- Diagnose bottlenecks.

- Measure the impact of optimizations.

This topic explains what to look for to monitor dynamic table performance and how to diagnose issues. For information about monitoring tools, see Monitor your dynamic tables using SQL table functions.

Tip

For a hands-on example, see Optimize queries for incremental refresh.

Key performance indicators¶

To monitor dynamic table performance, focus on the metrics described in this section.

Refresh duration¶

Refresh duration measures how long each refresh takes to complete. To spot performance degradation, track refresh duration over time.

Warning signs:

- Duration increases over time: Growing data volumes or degrading data locality can cause refresh times to steadily increase.

- Duration approaches target lag: When refreshes take nearly as long as your target lag, you might not meet data freshness requirements.

- High variance in duration: Large swings in refresh time might indicate workload spikes or resource contention.

To view refresh duration, see Monitor the refresh status for your dynamic tables.

Lag metrics¶

Lag metrics show how well your dynamic table meets its freshness target. For information about how target lag works, see Understanding dynamic table target lag.

Key metrics:

- Actual lag: The time between when source data changed and when the dynamic table reflected those changes.

- Time within target lag ratio: The percentage of time a table stayed within its target lag. A ratio below one indicates that the pipeline isn’t meeting its freshness goal.

- Maximum lag: The longest actual lag during a given period.

To view lag metrics, see Monitor the refresh status for your dynamic tables.

Partition statistics¶

For incremental refreshes, the number of partitions scanned should be proportional to the data that changed, not the total table size. High partition scans indicate poor data locality.

Warning signs:

- Scanning a large percentage of total partitions during incremental refresh.

- Partition scans increasing over time without corresponding data growth.

To view partition statistics, see Analyze query profiles.

For guidance on improving data locality, see Improve data locality.

Refresh mode¶

The refresh mode directly affects performance. Verify that your dynamic table uses the expected mode.

To check refresh mode, use SHOW DYNAMIC TABLES and review the

refresh_mode and refresh_mode_reason columns. In Snowsight, view the

refresh mode in the object header.

For guidance on choosing the right refresh mode, see Choose a refresh mode.

Diagnose slow refreshes¶

When refreshes take longer than expected, follow these steps to identify the cause:

-

Check the refresh history for trends in refresh duration, such as gradual increases or sudden spikes (Monitor the refresh status for your dynamic tables).

-

Review the query profile to identify bottlenecks (Analyze query profiles):

- High partition scans suggest poor data locality.

- Bytes spilled suggest that the warehouse is too small.

- Specific operators taking a long time might indicate an opportunity to optimize your dynamic table query.

-

Check whether lag consistently exceeds your target, which indicates that refreshes might not keep up with your data volume (Monitor the refresh status for your dynamic tables).

-

Review upstream dependencies to check whether upstream tables cause delays or produce large volumes of changes.

In the Graph view in Snowsight, look for the following conditions:

- Upstream tables executing a refresh (shown with

executingstatus). - Failed or suspended upstream tables.

- Upstream tables taking longer than usual to refresh.

To access the Graph view, see View the graph of tables connected to your dynamic tables.

- Upstream tables executing a refresh (shown with

-

Check the volume of changes that the dynamic table processes, because large volumes of changes from upstream dependencies can slow down refreshes.

Use the DYNAMIC_TABLE_REFRESH_HISTORY function to see how many rows changed in recent refreshes:

When change volume is high relative to total table size (more than five percent of the table rows), consider using full refresh mode instead.

Common patterns and recommended actions¶

- Refresh duration is stable, but lag is high: Your target lag is probably too aggressive for the current warehouse size and data volume. Refreshes finish successfully but can’t keep up with incoming changes. Check whether your target lag and warehouse resources match your data volume.

- Refresh duration suddenly spikes and bytes spilled are high: The warehouse doesn’t have enough memory to process the refresh, either because the warehouse is too small or because other queries are running at the same time. Increase the warehouse size or move dynamic table refreshes to a dedicated warehouse.

- Partition scans increase over time, but data volume stays the same: Your data locality is poor, which forces Snowflake to scan more partitions than necessary. Check your clustering keys and data locality. Also check whether upstream changes affect many scattered partitions instead of a few contiguous ones.

- Each refresh processes a large portion of the table (more than five percent of rows or partitions): Incremental refresh provides little benefit when most of the table changes frequently. Switch to full refresh mode or redesign your pipeline to reduce the amount of data that changes with each refresh.

Based on your findings, apply appropriate fixes from Optimize queries for incremental refresh.

Note

Skipped or failed refreshes are typically caused by configuration issues, not performance problems. See Diagnosing common dynamic table refresh issues.

Analyze query profiles¶

The query profile shows detailed execution statistics for each refresh. When a refresh is slow, the query profile helps you identify opportunities for optimization.

To access the query profile:

- Navigate to Transformation » Dynamic Tables.

- Select the dynamic table and go to the Refresh History tab.

- Select Show query profile next to the refresh you want to analyze.

First, get the query ID from refresh history:

Then analyze the query profile with the GET_QUERY_OPERATOR_STATS function:

What to look for¶

- Partitions scanned vs. pruned: When partition scans are high relative to the total number of partitions, the cause is usually poor data locality or missing clustering.

- Time distribution: Check which operators consume the most time. Operators that take disproportionately long might indicate an opportunity to optimize your query. See Optimize queries for incremental refresh for operator-specific guidance.

- Bytes spilled to local or remote storage: High bytes spilled often indicate that the warehouse is too small for the refresh workload or that other queries running on the same warehouse reduce the memory available for refreshes. Consider increasing the warehouse size or running dynamic table refreshes on a dedicated warehouse to reduce contention.

For more guidance on how to address issues found in the query profile, see Optimize queries for incremental refresh.

Monitor warehouse usage¶

To check whether your warehouse can handle your dynamic table workload and find ways to reduce costs, monitor warehouse usage.

Key metrics to monitor¶

- Bytes spilled: Bytes spilled to local or remote storage means that the warehouse might be too small. Consider increasing warehouse size. For more details on identifying and troubleshooting bytes spilled, see Finding queries that spill to storage.

- Warehouse utilization: Check whether the warehouse has enough resources for refresh workloads. Low utilization means you might have an oversized warehouse. High queue times mean your warehouse is too small or runs too many concurrent queries.

- Query queuing: Queued queries delay refreshes. If refreshes frequently queue, increase warehouse size, use a dedicated warehouse for dynamic table refreshes, or consider a multi-cluster warehouse to handle variable workloads.

- Credit usage: Track credits to balance performance with costs. Monitor regularly to find opportunities to right-size warehouses or adjust refresh schedules.

To view warehouse usage and queue times, see Reducing queues. Optimize warehouse configuration for dynamic tables with Optimize queries for incremental refresh.

Monitor dependencies¶

Dependencies between dynamic tables can affect performance. Performance issues in upstream tables cascade to downstream tables because a downstream table must wait for upstream tables to complete their refreshes before it can start its own refresh.

To diagnose performance issues related to upstream dependencies, see Diagnose slow refreshes.

To view the graph of dependencies, see View the graph of tables connected to your dynamic tables.

Set up alerts for performance issues¶

You can set up alerts to notify you when performance degrades. Consider creating alerts for the following conditions:

- Refresh duration exceeds a threshold.

- Lag consistently misses the target.

Alerts use event tables to track refresh events. For setup instructions, see Set up alerts on new data to monitor refreshes.