Understanding primary keys in dynamic tables¶

Snowflake can use primary keys in dynamic tables and dynamic iceberg tables to track row-level changes more efficiently and to enable incremental refresh downstream of full refresh dynamic tables. Instead of relying on change-tracking columns, Snowflake uses primary keys as stable row identifiers to compute the minimal set of changes between refreshes.

This is especially useful in the following scenarios:

- Base tables are periodically rewritten through INSERT OVERWRITE rather than updated in place, which normally prevents Snowflake from detecting what changed between versions.

- The pipeline reads from an externally-managed Apache Iceberg™ v2 table, which precludes row lineage.

- Some dynamic tables must use full refresh mode because of unsupported incremental constructs, but downstream tables would benefit from incremental processing.

Types of primary keys in dynamic tables¶

Snowflake supports two types of primary key use cases for dynamic tables: 1) row-level change tracking and 2) derivation of a unique key for the dynamic table itself.

Primary key row-level lineage-based change tracking¶

When a base table has a primary key constraint with the RELY property set, Snowflake

uses that key for row-level change tracking in downstream dynamic tables. This is particularly

useful when the base table is periodically rewritten through INSERT OVERWRITE, which normally

prevents change tracking across table versions.

With a reliable primary key, Snowflake identifies which rows changed between refreshes by comparing primary key values instead of relying on internal change-tracking columns. This enables incremental processing even when the underlying data is fully replaced.

To set the RELY property on a base table primary key:

Unique key derivation¶

Snowflake can automatically derive a reliable unique key from the query definition of a dynamic table. For example, the following SQL constructs produce derived unique keys:

- GROUP BY: The grouping columns form a unique key because each group produces exactly one output row.

- QUALIFY ROW_NUMBER() = 1: The partition-by columns form a unique key because the filter keeps exactly one row per partition.

- Reliable base table primary keys: The primary key of the base table is used as the unique key of the dynamic table.

Snowflake registers derived primary keys as unique constraints on the dynamic table. Because these constraints come from the query structure, they’re fully reliable without additional validation.

To check whether a dynamic table has a derived primary key, run:

When to use primary keys¶

Primary keys are useful in the following scenarios:

- Improve change tracking for INSERT OVERWRITE workloads

When a base table is periodically rewritten through INSERT OVERWRITE, Snowflake can’t use standard change-tracking columns to detect what changed. A primary key lets Snowflake compare rows by key value and process only the actual changes, avoiding a full recomputation of the dynamic table.

- Enable incremental refresh downstream of full refresh dynamic tables

Normally, a dynamic table in incremental refresh mode can’t be downstream of a dynamic table in full refresh mode. When the upstream full refresh dynamic table has a system-derived unique key, Snowflake can compute the changes between full refreshes, allowing downstream tables to refresh incrementally. This removes a major blocker for incremental pipelines.

- Reduce change propagation in pipelines

Primary keys enable value-based change reduction at each stage of a pipeline. Snowflake can filter out rows where the primary key exists in both the old and new versions with identical values, reducing the volume of changes that propagate to downstream tables.

Key behaviors¶

- Opt-in for downstream incremental refresh: To use incremental refresh on a dynamic table

that reads from a full refresh dynamic table with a derived unique key, you must explicitly set

REFRESH_MODE = INCREMENTALon the downstream table. SettingREFRESH_MODE = AUTOcontinues to resolve to FULL. - Verify primary key-based change tracking support: Use

SHOW UNIQUE KEYS IN <dt_name>to check whether a dynamic table has a derived unique key. Alternatively, create a downstream dynamic table withREFRESH_MODE = INCREMENTALand check whether the creation succeeds. - Masking policies: Masking policies that obfuscate primary key columns prevent Snowflake from using those keys for change tracking. In this case, Snowflake falls back to standard change-tracking columns.

Next steps¶

For examples and implementation guidance, see Use primary keys to optimize dynamic table pipelines and Tutorial: Use primary keys to optimize dynamic table pipelines.

For the full syntax of CREATE DYNAMIC TABLE, see CREATE DYNAMIC TABLE.

Use primary keys to optimize dynamic table pipelines¶

Snowflake can use primary keys to track row-level changes in dynamic tables without relying on change-tracking columns. This enables incremental refresh for pipelines that run insert overwrite workloads including full refresh dynamic tables, which normally block downstream incremental processing.

Primary keys are especially effective when an INSERT OVERWRITE is performed on a base table where only a small fraction of the data is actually changed. In these cases, primary key-based change tracking processes only the changed rows instead of recomputing the entire table. A primary key provides a stable row identifier that persists across overwrites.

For conceptual background, see When to use primary keys.

Improve performance for INSERT OVERWRITE workloads¶

When a base table is periodically rewritten through INSERT OVERWRITE, standard change-tracking columns are reset and a dynamic table consuming the base table will see a set of inserts and deletes for all rows in the base table.

In the following example, an external process rewrites the dimension_table periodically, but

most rows remain the same:

When the dimension table is rewritten through INSERT OVERWRITE, Snowflake uses the primary key to identify which dimension rows actually changed and refreshes only the affected facts, rather than recomputing the entire join.

Enable incremental refresh downstream of a full refresh dynamic table¶

Normally, a dynamic table with REFRESH_MODE = INCREMENTAL can’t read from a dynamic table

with REFRESH_MODE = FULL. When the full refresh dynamic table has a system-derived unique key,

you can explicitly set the refresh mode to INCREMENTAL.

Example: Use a base table primary key¶

Create a base table with a primary key and set the RELY property so Snowflake uses it

for row-level change tracking:

Create a full refresh dynamic table that reads from the base table. Because the base table has a reliable primary key, Snowflake can derive a unique key from the base table and register it as a unique constraint for the dynamic table:

Create an incremental dynamic table downstream. This works because the upstream table has a system-derived reliable unique key:

Example: Use a query-derived primary key¶

When a dynamic table’s query includes a GROUP BY clause, Snowflake automatically derives a unique key from the grouping columns. Downstream tables can use this derived key for primary key-based change tracking and enable incremental refreshes.

The daily_sales table has a derived unique key on (sale_day, product_id) because the

GROUP BY guarantees one row per combination. A downstream table can refresh incrementally:

Check system-derived unique keys on a dynamic table¶

To see whether a dynamic table has a derived unique key, use the SHOW UNIQUE KEYS command:

If the output contains a unique key, the dynamic table supports primary key-based change

tracking. Downstream dynamic tables can use REFRESH_MODE = INCREMENTAL to read from it,

even if it uses full refresh mode.

You can also verify support by creating a downstream dynamic table with

REFRESH_MODE = INCREMENTAL. If the upstream table doesn’t have a reliable unique key,

the creation fails with an error.

Tutorial: Use primary keys to optimize dynamic table pipelines¶

Introduction¶

This tutorial shows you how to use primary keys to enable efficient incremental refresh in a dynamic table pipeline where the base table is periodically rewritten through INSERT OVERWRITE. You’ll build two dimension-fact join pipelines with the same data and query definition, one with a primary key and one without, and compare how they handle a dimension table rewrite.

About dimension-fact joins with INSERT OVERWRITE¶

In many data pipelines, dimension tables are periodically rewritten by external processes using INSERT OVERWRITE. This is common with ETL connectors and batch data loads. The rewrite replaces all rows in the table, even when only a small fraction of the data actually changed.

Without primary keys, Snowflake can’t determine what changed across a rewrite and treats every row as new. This forces the pipeline to recompute everything in each refresh cycle.

When the dimension table has a primary key with the RELY property, Snowflake uses the key

to identify which rows actually changed between rewrites. The dynamic table then processes only

the affected rows, even though the underlying data was fully replaced.

What you’ll learn¶

In this tutorial, you’ll learn how to complete the following tasks:

- Create two dimension tables with the same data: one with a primary key and one without.

- Build two dynamic table pipelines with the same join query and compare their behavior.

- Simulate a dimension table rewrite with INSERT OVERWRITE where only 10% of rows change.

- Compare the incremental refresh performance of both dynamic tables on the same data.

Prerequisites¶

You need access to a Snowflake environment with the following resources:

- A warehouse for compute resources. Snowflake recommends using an x-small warehouse.

- The privileges required to create databases, schemas, and dynamic tables. For more information, see Access control privileges.

If you don’t have a user with the necessary permissions, ask someone who does to create one for you. Users with the ACCOUNTADMIN role can create new users and grant them the required privileges.

Note

For the best experience, complete this tutorial in Snowsight so that you can quickly view the query history and monitor your dynamic table performance.

Step 1: Create the source data¶

Start by setting up two dimension tables (one with a primary key, one without) and a shared fact table.

Create a database and schema for the tutorial:

Create a dimension table with a primary key and the RELY property. The RELY property tells

Snowflake that it can trust the primary key for optimizations such as change tracking:

Create a second dimension table with the same schema but no primary key:

Create a fact table for order transactions:

Insert sample data into the dimension table with a primary key. This generates 100,000 products across 10 categories:

Copy the same data into the dimension table without a primary key:

Insert sample order data. This generates 10 million orders that reference the products:

Step 2: Create dynamic tables for comparison¶

In this step, you will create two dynamic table pipelines with the same join query. The only difference is whether the dimension table has a primary key. This lets you directly compare their refresh performance on the same data.

Note

Replace my_warehouse with the name of your warehouse.

Pipeline with primary key¶

Create a dynamic table that joins the dimension table (with primary key) to the fact table. Because the dimension table has a reliable primary key, this dynamic table can use incremental refresh:

Pipeline without primary key¶

Create the same join query using the dimension table without a primary key. Without a primary key, Snowflake can’t track row-level changes across INSERT OVERWRITE rewrites, so this dynamic table recomputes the entire join on each refresh:

Refresh both pipelines¶

Perform the initial refresh for both dynamic tables to establish a baseline:

Step 3: Simulate a dimension table rewrite and compare¶

Now simulate the common scenario where an external process rewrites the dimension table through INSERT OVERWRITE with a small percentage of rows changed.

Rewrite the dimension tables¶

Rewrite both dimension tables with INSERT OVERWRITE. This updates the price for 10% of products (those in Category 01) while keeping the rest identical:

Even though every row was rewritten in both tables, only about 10,000 products (Category 01) actually have different values. The pipeline with a primary key can detect this; the pipeline without a primary key can’t.

Refresh and compare performance¶

Refresh both dynamic tables to pick up the changes:

Check the execution time and scan metrics:

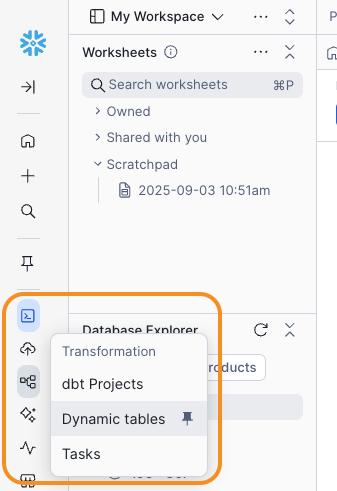

-

Navigate to Transformation » Dynamic Tables.

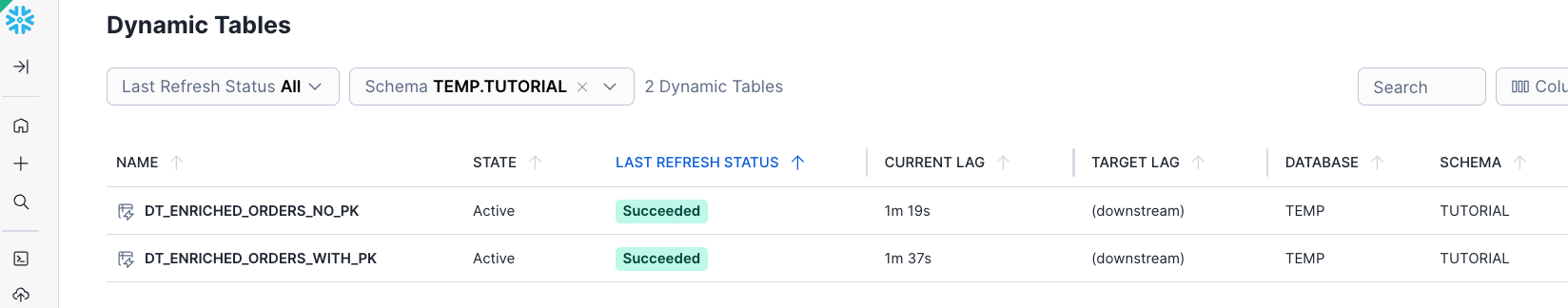

-

Filter the list by selecting the

tempdatabase, then selectdt_enriched_orders_no_pk.

-

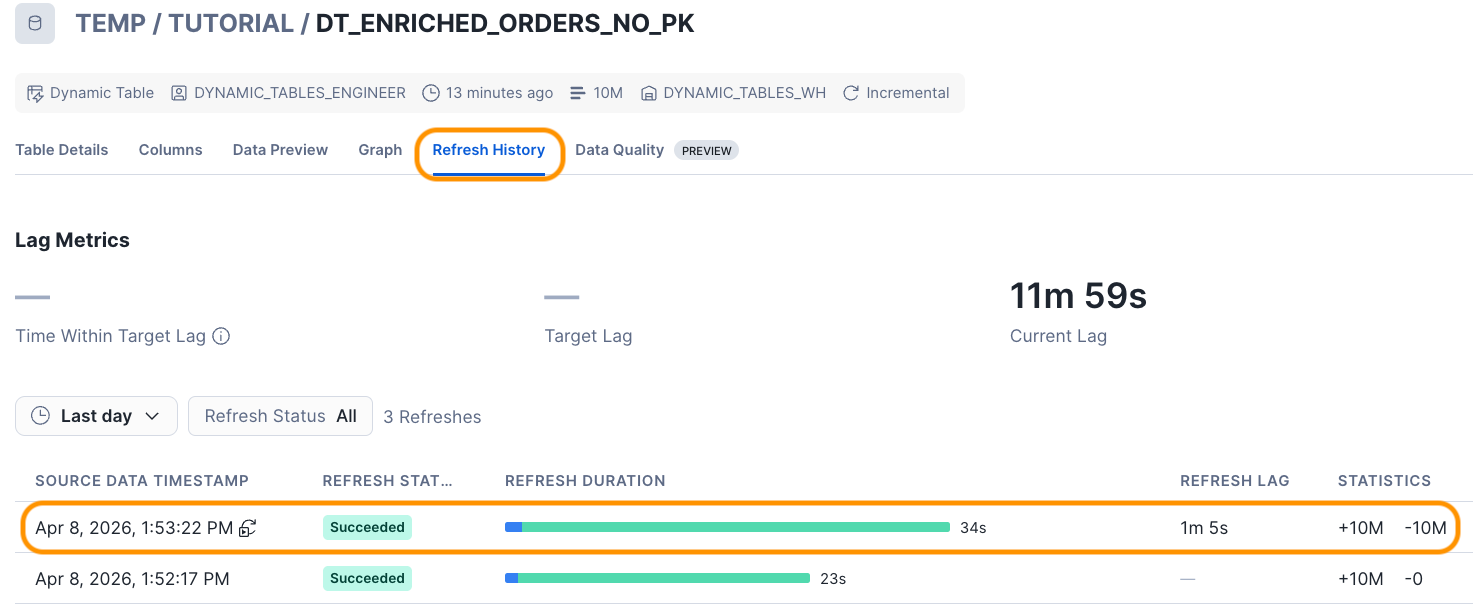

Select the Refresh History tab and notice the REFRESH DURATION value for the most recent refresh.

Because the dimension table has no primary key, Snowflake can’t distinguish changed rows from unchanged rows after the INSERT OVERWRITE. Every row in the rewritten table looks like a new insertion, so the engine treats all 100,000 dimension rows as changed and must re-join them against the entire 10-million-row fact table. This produces far more inserted and deleted rows and a much higher refresh duration, even though only 10% of the dimension data actually changed.

-

Now refresh the optimized

dt_enriched_orders_with_pkdynamic table: -

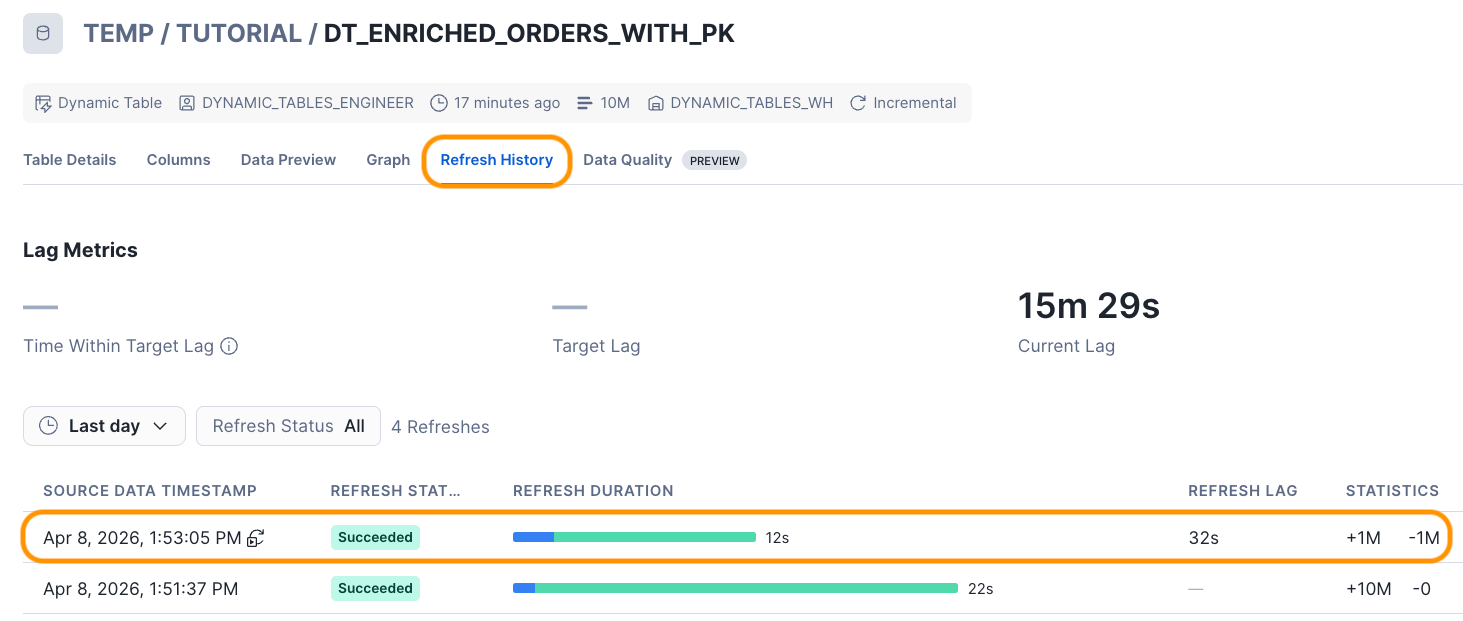

Repeat the previous steps to check the Refresh History for the optimized table:

Compare the two refresh operations. The optimized

dt_enriched_orders_with_pkdynamic table should complete significantly faster than the suboptimaldt_enriched_orders_no_pkdynamic table. In the example results, the suboptimal dynamic table took 34 seconds and updated 20 million rows in total, while the optimized table took only 12 seconds and updated only 2 million rows in total.

The results show the difference between the two approaches:

- dt_enriched_orders_with_pk: Uses the primary key to identify the ~10% of dimension rows

that actually changed, then processes only the orders that reference those products. The

rows_insertedandrows_deletedcounts reflect just the affected rows, and the refresh duration is significantly lower. - dt_enriched_orders_no_pk: Can’t determine what changed in the rewrite, so it reprocesses the entire 10-million-row join. The row counts and refresh duration are much higher.

Tip

The performance difference increases when a) the fact table grows and b) fewer dimension rows actually changed. In production pipelines where dimension tables contain millions of rows and only a small fraction changes on each load cycle, primary key-based change tracking can reduce refresh times by an order of magnitude.

Clean up¶

To delete all objects created for this tutorial, run the following DROP statement:

Summary and additional resources¶

In this tutorial, you used primary keys to enable an efficient incremental refresh in a dynamic table pipeline where the dimension table is periodically rewritten through INSERT OVERWRITE. By comparing two pipelines with the same data and query, you saw how a primary key lets Snowflake identify only the rows that actually changed and process just those changes through the join.

Along the way, you completed the following tasks:

- Created two dimension tables with the same data: one with a primary key (

RELY) and one without. - Built two dynamic table pipelines with the same join query and compared their behavior after an INSERT OVERWRITE rewrite.

- Simulated a dimension table rewrite with INSERT OVERWRITE, changing 10% of the rows, and compared the refresh performance of both pipelines.

Key concepts demonstrated:

- Primary key-based change tracking: When a base table has a primary key with

RELY, Snowflake uses it to compute row-level changes across rewrites. - INSERT OVERWRITE compatibility: Primary keys solve the change-tracking gap that occurs when tables are fully replaced. Without a primary key, Snowflake treats every row as changed.

For more information about dynamic tables and optimization techniques, explore the following resources:

- Optimize queries for incremental refresh – Query optimization for incremental refresh.

- Understanding immutability constraints – Use immutability constraints for historical data.

- Monitor dynamic tables – Monitor dynamic table performance.

- Understanding dynamic table initialization and refresh – Understand refresh modes and scheduling.