JDBC data sources for Snowpark Connect for Spark¶

This section provides a guide and sample code for reading data from and writing data to external databases (such as MySQL and PostgreSQL) using the Snowpark Connect for Spark JDBC data source feature. It covers both client-side and Snowflake Notebook setup.

Supported data sources¶

SQL Server

MySQL

PostgreSQL

Neo4j

Derby

Client-side setup (MySQL)¶

This setup is required when running Snowpark Connect from a local client application, such as a Python script or IDE.

Prerequisites¶

Java Runtime Environment (JRE) / Java Development Kit (JDK):

Install a JRE or JDK. The architecture (for example, 64-bit) of your Java installation must match the architecture of your Python installation.

Example source for installation: Adoptium Temurin Releases (if using Java 11).

Set JAVA_HOME Environment Variable:

Configure the

JAVA_HOMEenvironment variable to point to the root directory of your Java installation.Example (macOS/Linux):

Set CLASSPATH Environment Variable:

Add the path to your specific database’s JDBC driver

.jarfile to theCLASSPATHenvironment variable. This allows the Java environment to find the necessary driver.Example (for MySQL driver):

Sample client code (read from MySQL)¶

This example demonstrates how to read a table from a MySQL database

using spark_session.read.jdbc().

Sample client code (write to MySQL)¶

This example demonstrates how to write data into a MySQL database using

spark_session.write.jdbc().

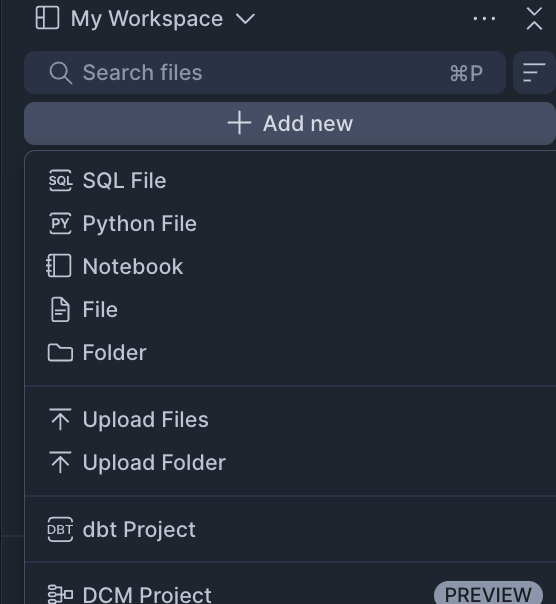

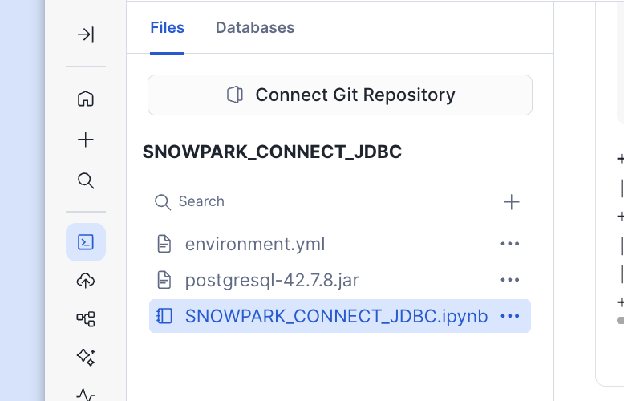

Snowflake Workspace Notebook setup (PostgreSQL)¶

This setup is used when running Snowpark Connect directly within a Snowflake Workspace Notebook environment.

Setup steps¶

The

snowpark-connectpackage is included in Workspace Notebook by default.Download and Upload JDBC Driver:

Download the appropriate JDBC driver

.jarfile for your external database (for example, PostgreSQL JDBC Driver).Upload the downloaded

.jarfile directly into your notebook environment.

Create External Integration:

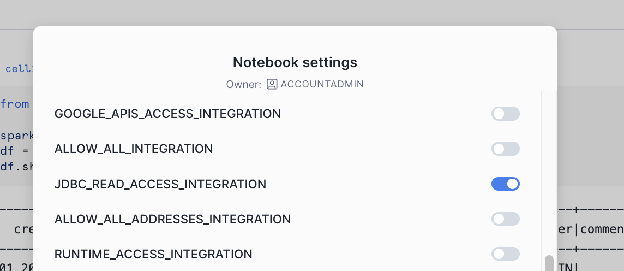

Activate External Integrations (Network Rule & Integration):

Snowflake requires an External Access Integration to allow the notebook to communicate with external network locations. You must define a Network Rule for the host and port of your external database.

Sample Workspace Notebook code (read from PostgreSQL)¶

This example shows the necessary Python code to initialize the session, load the driver, and read data from PostgreSQL.

Sample Workspace Notebook code (write to PostgreSQL)¶

This example shows the necessary Python code to initialize the session, load the driver, and write data into PostgreSQL.

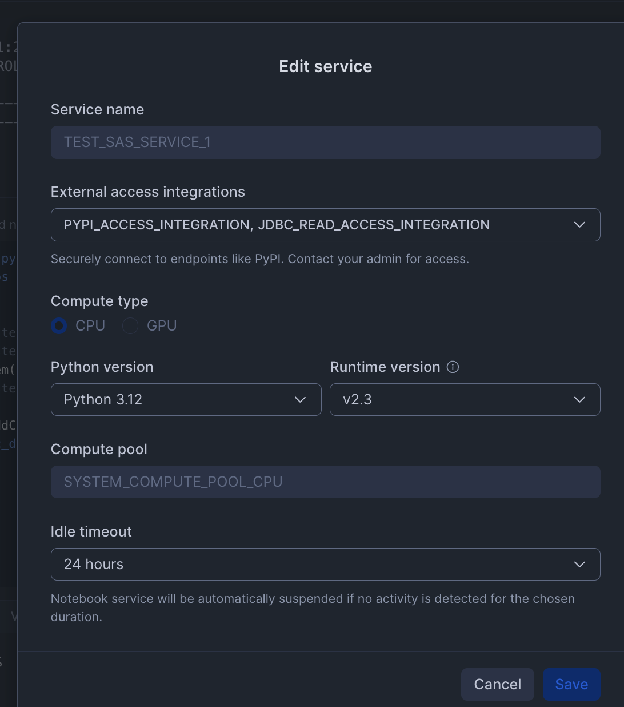

Snowflake Warehouse Notebook setup (PostgreSQL)¶

This setup is used when running Snowpark Connect directly within a Snowflake Notebook environment.

Setup steps¶

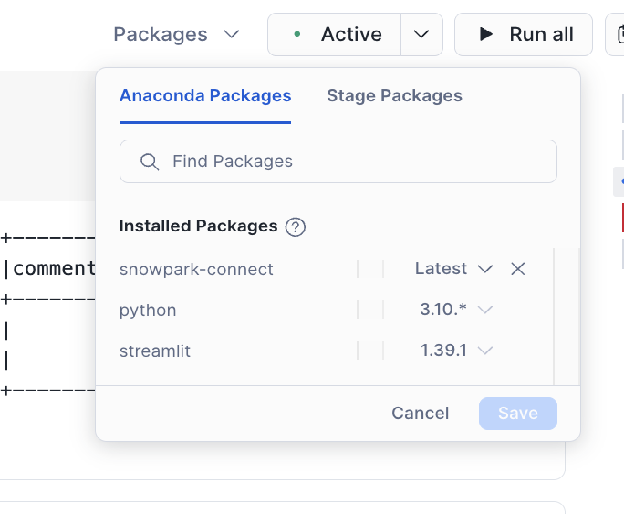

Add the snowpark-connect package:

Ensure the

snowflake-snowpark-connectpackage is added to your notebook environment.

Download and Upload JDBC Driver:

Download the appropriate JDBC driver

.jarfile for your external database (for example, PostgreSQL JDBC Driver).Upload the downloaded

.jarfile directly into your notebook environment.

Activate External Integrations (Network Rule & Integration):

Snowflake requires an External Access Integration to allow the notebook to communicate with external network locations. You must define a Network Rule for the host and port of your external database.

Sample Warehouse Notebook code (read from PostgreSQL)¶

This example shows the necessary Python code to initialize the session, load the driver, and read data from PostgreSQL.

Sample Warehouse Notebook code (write to PostgreSQL)¶

This example shows the necessary Python code to initialize the session, load the driver, and write data into PostgreSQL.

Client-side setup (Neo4j)¶

Snowpark Connect for Spark supports reading and writing data from Neo4j graph databases using the org.neo4j.spark.DataSource

format. This section covers client-side setup.

Prerequisites¶

Running Neo4j instance:

You need a running Neo4j instance accessible over the Bolt protocol (port 7687).

Neo4j JDBC driver:

Download the

full-bundleJAR from Maven Central (https://search.maven.org/artifact/org.neo4j/neo4j-jdbc-full-bundle) (for example,neo4j-jdbc-full-bundle-6.10.2.jar).Configure spark.jars:

Point to the local path of the downloaded JAR when creating the Spark session:

Sample client code (read from Neo4j)¶

Sample client code (write to Neo4j)¶

Snowflake Notebook setup¶

To connect to Neo4j from a Snowflake Notebook (Warehouse or Workspace), follow the same Network Rule and

External Access Integration steps described in the PostgreSQL sections above. Use the Neo4j Bolt port

(typically 7687) in your Network Rule, upload the Neo4j JDBC full-bundle JAR, and load it with

spark.jars configuration. The read and write code is identical to the client-side examples.

Supported configurations¶

The following options are available when using the org.neo4j.spark.DataSource format:

Option |

Description |

Required |

|---|---|---|

|

Neo4j connection URL (for example, |

Yes |

|

Neo4j username. |

Yes |

|

Neo4j password. |

Yes |

|

Node label to read or write (for example, |

Required for writes. One of |

|

Custom Cypher query to execute on read. |

One of |

|

Relationship type to read (for example, |

One of |

Supported URL formats:

Format |

Description |

|---|---|

|

Standard Bolt protocol (standalone instances). |

|

Bolt with TLS encryption. |

|

Neo4j protocol with routing (clusters). |

|

Neo4j protocol with TLS and routing. |

Note

For standalone Neo4j instances, use bolt://. The neo4j:// protocol requires a Neo4j cluster

with routing capabilities.